“Grit in the Gears” Costs Energy in Modular Information Systems

Digital information may appear to exist as abstract ones and zeroes, flipping effortlessly from one to another. But in fact there is a minimum amount of energy required to run any computing system, regardless of how “energy efficient” its component parts are. A recent paper from physics professor Jim Crutchfield and Alex Boyd (Ph.D., physics, '18) at the UC Davis Complexity Sciences Center, with Dibyendu Mandal at UC Berkeley, shows that there is some inescapable friction, or “grit in the gears,” between the levels of organization in an information system.

In 1961, physicist Rolf Landauer showed that however you do it, erasing data costs energy. “Landauer’s Principle” sets a minimum energy cost for each bit erased, and with it a floor on the energy required to run a computer.
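
To give a sense of scale, Landauer's bound is usually written as k_B T ln 2 per erased bit, where k_B is Boltzmann's constant and T is the temperature of the surroundings. The short Python sketch below evaluates that expression; the function name `landauer_limit_joules` and the 300 K room temperature are assumptions chosen for illustration and are not taken from the paper.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann's constant in joules per kelvin (exact SI value)

def landauer_limit_joules(temperature_kelvin: float, bits: float = 1.0) -> float:
    """Minimum heat dissipated when erasing `bits` bits at the given temperature."""
    if temperature_kelvin < 0:
        raise ValueError("temperature must be non-negative")
    return bits * BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)

if __name__ == "__main__":
    # 300 K is an assumed room temperature, used only as an example.
    print(f"{landauer_limit_joules(300.0):.3e} J per bit")
```

At the assumed 300 K this comes to roughly 2.9 × 10⁻²¹ joules per bit, a tiny figure that nonetheless cannot be engineered away.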

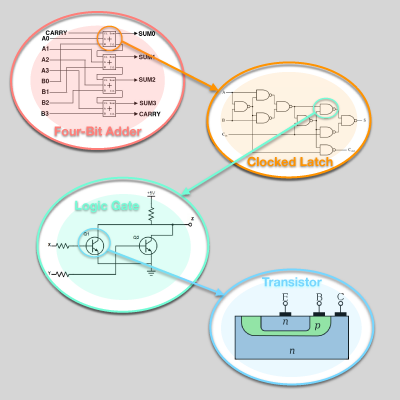

In their paper, Crutchfield, Boyd and Mandal point out that modern computers are organized in modules that break computation up into multiple steps. These modules are themselves organized in hierarchies: transistors are organized in logic gates, which are organized in circuits, and so on. What effect, they asked, do these compartments and layers of organization have on the energy cost of managing information?

To work efficiently, these modules have to be able to store information about the modules that surround them and the levels above and below. Energy is lost when modules are not able to store this information about the complexity of the environment around them, the researchers said.

That means that there is an irreducible amount of “friction” between layers in a compartmentalized system, over and above the Landauer limit. This can be reduced by good design, but not overcome entirely.

The findings have implications beyond computing, Crutchfield said. Biological systems are organized into compartments: organelles within cells, cells within tissues and tissues within organs. The neurons in our brains are organized in hierarchies of circuits.

The work is published in the journal Physical Review X.

— Andy Fell, UC Davis News and Media Relations